1) Track up to five people's poses in real-time

Like to dance? You and some friends can practice matching the moves of your favorite band! A P90-powered smartphone can capture and track your moves in real time. From there, use MediaTek's AI-enhanced depth mapping to apply AR overlays and create your own fresh and inventive music video.

2) Full body avatar AR

Facial animoji was just the first step; 2019 is about the full-body avatar. Powering the next generation of online AR experiences, the P90 tracks your whole-body movements so you can become your online avatar in real time. Great fun for recording friends or streaming yourself to fans.

3) 3D pose tracking

Bringing 3D capture into the hands of everyone. Below you can see the robots mimicking the model's movements in real time. This opens the door to everything from entertainment to education; use it in animation, game or service development, or export it to a 3D printer and easily create real-life models.

4) Multiple Object Detection

The P90 can now detect and identify multiple objects in a single scene in real time, adding convenience to apps and services that rely on visual identification, such as translation.

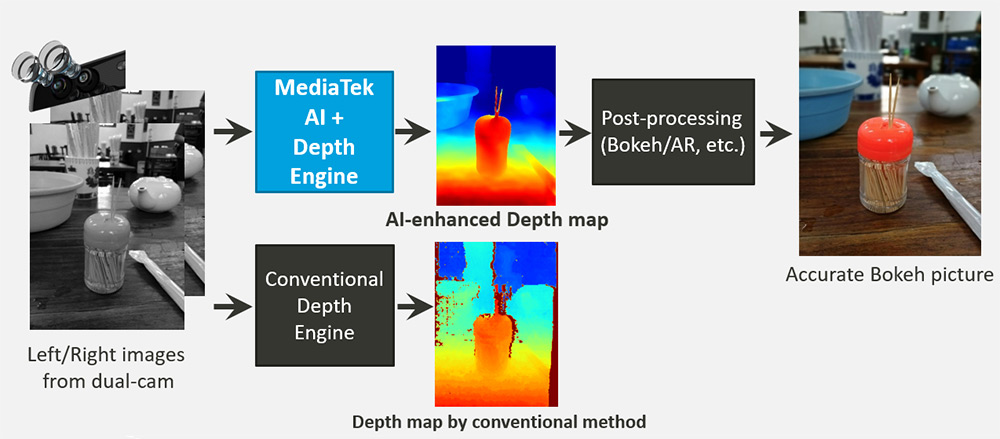

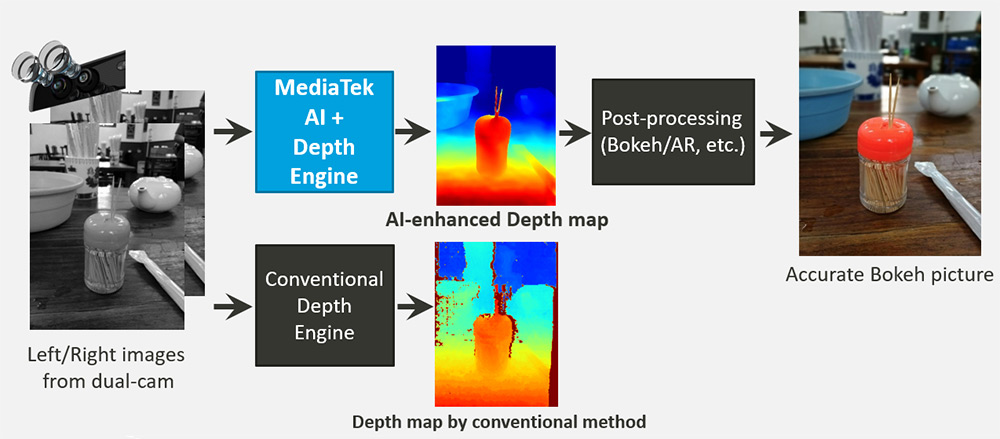

5) AI depth sensing

Portrait photography with bokeh effects has become the must-have feature in modern smartphones. This requires a high-performance depth engine to separate the subject from the background. MediaTek has now added AI-enhanced edge detection to its powerful hardware depth engine, giving the P90 a real sense of vision that provides even more accuracy, even in difficult scenarios like low-contrast or low-light environments. This means you get the best portrait pictures ever, anytime you decide to take one.
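To make the idea of separating subject from background concrete, here is a minimal sketch of depth-based bokeh. It is not MediaTek's depth engine or its AI edge detection; the function names (`subject_mask`, `box_blur`, `portrait`), the grayscale list-of-lists image, and the simple box blur are all assumptions chosen for illustration.

```python
def subject_mask(depth, focus_depth, tolerance):
    """True where a pixel's depth is close enough to the focus depth (the subject)."""
    return [[abs(d - focus_depth) <= tolerance for d in row] for row in depth]


def box_blur(img, radius=1):
    """Average each pixel with its neighbours; edges use only the pixels that exist."""
    rows, cols = len(img), len(img[0])
    out = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            window = [
                img[rr][cc]
                for rr in range(max(0, r - radius), min(rows, r + radius + 1))
                for cc in range(max(0, c - radius), min(cols, c + radius + 1))
            ]
            out_row.append(sum(window) / len(window))
        out.append(out_row)
    return out


def portrait(img, depth, focus_depth, tolerance, radius=1):
    """Keep subject pixels sharp and replace background pixels with blurred ones."""
    mask = subject_mask(depth, focus_depth, tolerance)
    blurred = box_blur(img, radius)
    return [
        [img[r][c] if mask[r][c] else blurred[r][c] for c in range(len(img[0]))]
        for r in range(len(img))
    ]
```

In this sketch the quality of the result depends entirely on how cleanly the mask follows the subject's outline, which is the part the text says AI-enhanced edge detection improves.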

6) AI Noise Reduction

We've created a new noise reduction algorithm (AI:NR) that's faster and more effective than ever, improving low-light camera performance so you can capture photos whenever and wherever you like.

7) Video call quality enhancement

By integrating AI facial detection and intelligent scene detection into a video compression algorithm, video calls and livestreams will automatically tune the encoding priority to focal areas within a scene, such as faces, people or objects of interest, ensuring the best experience even if the call or stream has limited data bandwidth. In the picture below this is demonstrated by the circle, which shows the noticeably better quality in the focal encoding region.
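One common way to prioritize focal areas during encoding is to give blocks inside a detected region a lower quantization parameter (QP), so they receive more bits. The sketch below illustrates that general idea only; it is not MediaTek's algorithm. The `Box` type, the `qp_map` function, the 16-pixel block size and the default QP values are assumptions, and the 0 to 51 clamp follows the QP range used by H.264 and HEVC.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """A detected focal region (e.g. a face) in pixel coordinates."""
    x: int
    y: int
    w: int
    h: int


def qp_map(frame_w, frame_h, rois, block=16, base_qp=34, roi_qp_delta=-8):
    """Build a per-block QP grid; lower QP means more bits and higher quality."""
    cols = (frame_w + block - 1) // block
    rows = (frame_h + block - 1) // block
    grid = [[base_qp] * cols for _ in range(rows)]
    roi_qp = max(0, min(51, base_qp + roi_qp_delta))  # clamp to the valid range
    for roi in rois:
        # Convert the pixel box to the range of blocks it overlaps.
        c0 = max(roi.x // block, 0)
        r0 = max(roi.y // block, 0)
        c1 = min((roi.x + roi.w - 1) // block, cols - 1)
        r1 = min((roi.y + roi.h - 1) // block, rows - 1)
        for r in range(r0, r1 + 1):
            for c in range(c0, c1 + 1):
                grid[r][c] = roi_qp
    return grid
```

For example, `qp_map(64, 48, [Box(16, 16, 16, 16)])` yields a 3 by 4 grid in which only the block covering the region gets the lower QP, so a bandwidth-limited stream spends its bits where viewers are looking.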

8) Google Lens and ARCore support

Bringing the great Google Lens apps, visual search and Google ARCore intelligence to our P90 means developers can easily build on powerful experiences that are Android-native.

9) MediaTek NeuroPilot software ecosystem

We’re meeting the Edge AI challenge head-on with MediaTek NeuroPilot. It focuses on enabling high performance and power efficiency for AI features and applications through a software ecosystem. Developers can target the APU 2.0 directly or let the MediaTek NeuroPilot SDK intelligently handle the processing allocation for them. Applications can be built using common frameworks such as TensorFlow, TF Lite, Caffe, Caffe2, Amazon MXNet, Sony NNabla, or other custom third-party frameworks. At the API level for Android OS, the Google Android Neural Networks API (Android NNAPI) and the MediaTek NeuroPilot SDK are supported. The NeuroPilot SDK extends the Android NNAPI, allowing developers and device makers to bring their code closer to the metal for better performance and power efficiency.