What is Sensor Fusion?

About Hope Carpenter

Hope Carpenter is a Digital Marketing Specialist at the Exponential Technology Group (XTG). With a background in journalism and marketing, she brings a research-driven, detail-oriented approach to technical content development within the electronic components industry. Her work focuses on translating complex engineering concepts into clear, accurate digital content that supports engineers and technical decision-makers, while strengthening brand visibility across XTG.

Autonomous systems process vast amounts of sensor data, and simultaneous streams from cameras, IMUs, radar, LiDAR, and other sensors compound the complexity. No individual sensor can see “everything,” so systems aggregate data from multiple sensors to build a more complete picture. Sensor fusion combines these inputs into a single, reliable understanding of the environment, letting one sensor compensate for another’s weaknesses. As edge AI and real-time perception stacks continue to evolve, sensor fusion underpins the accuracy, safety, and scalability required for next-generation autonomous systems.

Understanding Sensor Fusion Techniques

At a high level, sensor fusion relies on structured algorithms to manage noise, uncertainty, and timing differences between sensors, often using techniques such as Kalman filtering. The Kalman filter (KF) is a foundational tool for combining sensor measurements and remains a core example of classical sensor fusion. For linear systems with Gaussian noise, the KF estimates a system’s true state by weighting each new measurement against the current prediction according to their relative uncertainty. Its extensions, the Extended Kalman Filter (EKF) and Unscented Kalman Filter (UKF), handle non-linear systems in different ways: the EKF linearizes the model around the current estimate, while the UKF propagates a small set of deterministically chosen sample points (sigma points) through the non-linear model, which typically improves accuracy for strongly non-linear systems.
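To make the uncertainty weighting concrete, here is a minimal scalar Kalman filter in Python. It is a sketch assuming a single constant quantity observed by two sensors with known noise variances; the class name `ScalarKalman` and all readings are hypothetical and not taken from any library.

```python
from dataclasses import dataclass


@dataclass
class ScalarKalman:
    """Minimal 1D Kalman filter for a constant or slowly drifting quantity."""
    x: float        # current state estimate
    p: float        # variance of the current estimate
    q: float = 0.0  # process-noise variance added on every predict step

    def predict(self) -> None:
        # Constant-state model: the mean stays put, the uncertainty grows by q.
        self.p += self.q

    def update(self, z: float, r: float) -> float:
        # Kalman gain: the share of the innovation (z - x) to accept, based on
        # the estimate variance p versus the measurement variance r.
        k = self.p / (self.p + r)
        self.x += k * (z - self.x)
        self.p *= 1.0 - k
        return self.x


# Hypothetical readings of one temperature from a precise sensor (variance
# 0.25) and a noisy one (variance 4.0), interleaved as (value, variance).
kf = ScalarKalman(x=0.0, p=1000.0, q=0.01)
for z, r in [(20.4, 0.25), (19.1, 4.0), (20.1, 0.25), (21.5, 4.0)]:
    kf.predict()
    kf.update(z, r)
print(f"estimate={kf.x:.2f}, variance={kf.p:.3f}")
```

Because the gain `k` is derived from the variances, the precise sensor pulls the estimate strongly while the noisy one only nudges it, and the final variance ends up below that of either sensor on its own.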

Probabilistic approaches such as Bayesian inference and particle filters enable systems to handle complex or uncertain data, producing robust state estimates even when measurements are noisy or partially missing. AI-driven fusion with neural networks further advances object-level fusion and learned feature extraction, supporting real-world object detection and classification.
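A bootstrap particle filter represents the system's belief as a cloud of samples rather than a single Gaussian. The sketch below assumes a 1D position, a random-walk motion model, and Gaussian measurement noise (all hypothetical choices) and shows the predict, weight, and resample steps, including how a missing reading is handled by simply skipping the correction.

```python
import math
import random
from typing import List, Optional


def particle_filter_step(particles: List[float], z: Optional[float],
                         motion_sigma: float, meas_sigma: float,
                         rng: random.Random) -> List[float]:
    """One predict/weight/resample cycle of a bootstrap particle filter (1D)."""
    # 1. Predict: push every particle through a random-walk motion model.
    moved = [p + rng.gauss(0.0, motion_sigma) for p in particles]
    if z is None:
        # Missing measurement: keep the prediction and skip the correction.
        return moved
    # 2. Weight: Gaussian likelihood of the measurement given each particle.
    weights = [math.exp(-0.5 * ((z - p) / meas_sigma) ** 2) for p in moved]
    if sum(weights) == 0.0:
        # Every weight underflowed (e.g. a wild outlier): ignore this reading.
        return moved
    # 3. Resample: draw a new population in proportion to the weights.
    return rng.choices(moved, weights=weights, k=len(moved))


rng = random.Random(42)                      # fixed seed for repeatability
particles = [rng.uniform(0.0, 10.0) for _ in range(500)]
true_position = 4.0                          # hypothetical ground truth
for step in range(30):
    # Every fifth reading is dropped to mimic an intermittent sensor.
    z = None if step % 5 == 4 else true_position + rng.gauss(0.0, 0.5)
    particles = particle_filter_step(particles, z, 0.05, 0.5, rng)
estimate = sum(particles) / len(particles)
print(f"estimated position: {estimate:.2f}")
```

Swapping the Gaussian likelihood for any other measurement model is what lets particle filters cope with non-Gaussian or multi-modal uncertainty; evaluating every particle on every step is also where their higher computational cost comes from.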

Compared to single-sensor systems, which are limited by noise, blind spots, or environmental constraints, sensor fusion improves accuracy, reliability, and situational awareness. Each fusion approach comes with tradeoffs:

| Sensor Fusion Approach | Advantages | Tradeoffs |
| --- | --- | --- |
| Classical filters (KF, EKF, UKF) | Efficient and predictable | Less flexible for complex or highly non-linear data |
| Probabilistic methods (Bayesian inference, particle filters) | Robust to noise and uncertainty | Higher computational cost |
| AI-driven fusion (neural networks, learned features) | Handles high-dimensional data, advanced perception | High processing power and energy required |

Selecting the optimal method depends on the system’s accuracy, latency, and processing constraints.

Key Benefits

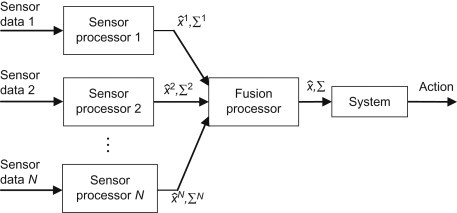

Source: Science Direct

Sensor fusion combines data from multiple sensors into a single, consistent view of the environment. Cross-validating measurements increases accuracy while reducing the impact of noise or temporary sensor degradation.
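For independent, unbiased sensors with Gaussian noise, combining measurements of the same quantity reduces to inverse-variance weighting. This sketch assumes that noise model and uses hypothetical barometer and GNSS altitude readings; `fuse_inverse_variance` is an illustrative helper, not a library function.

```python
from typing import Sequence, Tuple


def fuse_inverse_variance(readings: Sequence[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse independent, unbiased measurements of the same quantity.

    Each reading is (value, variance); returns (fused_value, fused_variance).
    """
    if not readings:
        raise ValueError("need at least one reading")
    # Each sensor's weight is the inverse of its variance.
    weights = [1.0 / variance for _, variance in readings]
    total = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, readings)) / total
    return fused, 1.0 / total


# Hypothetical altitude readings: barometer (variance 4 m^2), GNSS (16 m^2).
altitude, variance = fuse_inverse_variance([(100.0, 4.0), (104.0, 16.0)])
print(altitude, variance)
```

The fused estimate here is 100.8 m with a variance of 3.2 m², lower than the 4 m² of the better sensor alone, which is the accuracy gain from combining measurements.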

Cross-sensor validation adds redundancy and fault tolerance, allowing systems to continue operating even if one sensor fails or becomes obstructed. At the same time, integrating motion, position, and environmental data improves spatial awareness and localization, which is critical for safe navigation and precise operation.
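One simple way to implement cross-sensor validation is a median vote across redundant sensors. The sketch below assumes three or more sensors measuring the same quantity and a hypothetical tolerance `max_dev`; readings that are missing (`None`) or that stray too far from the median are excluded before averaging. The sensor names and values are illustrative.

```python
import statistics
from typing import Dict, List, Optional, Tuple


def fuse_redundant(readings: Dict[str, Optional[float]],
                   max_dev: float) -> Tuple[float, List[str]]:
    """Median-vote across redundant sensors, dropping missing or outlying ones.

    Returns (fused_value, names_of_rejected_sensors).
    """
    valid = {name: v for name, v in readings.items() if v is not None}
    if not valid:
        raise RuntimeError("no sensor produced a reading")
    # The median is robust: a single failed sensor cannot drag it far.
    median = statistics.median(list(valid.values()))
    accepted = {n: v for n, v in valid.items() if abs(v - median) <= max_dev}
    if not accepted:
        raise RuntimeError("sensors disagree; no consensus within max_dev")
    rejected = sorted(set(readings) - set(accepted))
    return statistics.mean(list(accepted.values())), rejected


# Hypothetical redundant readings: imu_c has failed high, imu_d is offline.
fused, faulty = fuse_redundant(
    {"imu_a": 1.02, "imu_b": 0.98, "imu_c": 7.5, "imu_d": None}, max_dev=0.5)
print(fused, faulty)
```

The median is used for the consistency check because one faulty sensor cannot shift it arbitrarily; with only two sensors a disagreement cannot be resolved this way, which is why voting schemes typically use three or more.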

From a design standpoint, sensor fusion enables better bill of materials (BOM) efficiency by distributing performance across multiple sensors rather than relying on a single high-cost component. Fusing data earlier in the processing pipeline also reduces downstream computational load and supports interoperability across different sensor types and vendors.

Three Levels of Sensor Fusion

Sensor fusion types are commonly categorized by the stage at which data from multiple sensors is combined. To support different system requirements, fusion can occur at multiple levels. Low-level fusion works with raw sensor data for maximum detail but requires more processing power. Mid-level fusion balances accuracy and efficiency by combining extracted features, while high-level fusion merges sensor outputs to support decisions with lower computational demand. These levels give designers flexibility in choosing the right trade-offs between detail, performance, and resource constraints.

| Sensor Fusion Type | Description | Typical Characteristics |
| --- | --- | --- |
| Low-Level Fusion | Combines raw sensor data into a detailed environmental view | Highest data fidelity, high processing load |
| Mid-Level Fusion | Integrates processed sensor features | Balanced accuracy and efficiency |
| High-Level Fusion | Merges sensor outputs for decisions or predictions | High abstraction, low processing load |
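In high-level fusion, each sensor pipeline has already produced its own decision, such as a probability that an object is present, and fusion combines those decisions. A common textbook approach, sketched here under the assumption that the sensors are conditionally independent and share the same prior, is to add their evidence in log-odds form. The helper names and the camera/radar probabilities are hypothetical.

```python
import math
from typing import Sequence


def _logit(p: float, eps: float = 1e-9) -> float:
    p = min(max(p, eps), 1.0 - eps)  # keep log-odds finite for p = 0 or 1
    return math.log(p / (1.0 - p))


def fuse_detections(probs: Sequence[float], prior: float = 0.5) -> float:
    """Combine per-sensor probabilities that the same object is present.

    Assumes the sensors are conditionally independent given the true state and
    that every probability was computed with the same prior.
    """
    prior_lo = _logit(prior)
    # Each sensor adds its evidence: posterior log-odds minus prior log-odds.
    total = prior_lo + sum(_logit(p) - prior_lo for p in probs)
    return 1.0 / (1.0 + math.exp(-total))


# Hypothetical object-level outputs for one track: camera 0.7, radar 0.8.
print(round(fuse_detections([0.7, 0.8]), 3))
```

Two moderately confident sensors combine to roughly 0.90. The independence assumption matters: if two sensors share a failure mode, such as both being blinded by the same glare, this rule will overstate confidence.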

How Sensor Fusion is Used Today

Sensor fusion is used in a variety of real-world systems. In consumer devices like smartphones, companies such as Apple use dedicated sensor hubs to combine accelerometer, gyroscope, and magnetometer data, enabling always-on motion tracking, navigation, and contextual awareness without overloading the main processor or draining battery life.
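A classic example of the accelerometer and gyroscope fusion that sensor hubs perform is the complementary filter. The sketch below is not Apple's algorithm; it assumes a single pitch axis, an x-forward/z-up axis convention, readings already converted to g and degrees per second, and a hypothetical blend factor `alpha = 0.98`.

```python
import math
from typing import Tuple


def accel_pitch_deg(ax: float, ay: float, az: float) -> float:
    # Pitch from the gravity vector; only trustworthy when the device is not
    # accelerating. Axis convention (x forward, z up) is an assumption.
    return math.degrees(math.atan2(-ax, math.sqrt(ay * ay + az * az)))


def complementary_step(pitch_deg: float, gyro_rate_dps: float,
                       accel_g: Tuple[float, float, float],
                       dt: float, alpha: float = 0.98) -> float:
    # High-pass the integrated gyro (smooth but drifting) and low-pass the
    # accelerometer tilt (noisy but drift-free).
    gyro_estimate = pitch_deg + gyro_rate_dps * dt
    return alpha * gyro_estimate + (1.0 - alpha) * accel_pitch_deg(*accel_g)


# Simulated stationary device tilted 10 degrees, with a 0.5 dps gyro bias.
tilt = math.radians(10.0)
accel = (-math.sin(tilt), 0.0, math.cos(tilt))
pitch = 0.0
for _ in range(2000):                      # 20 s at 100 Hz
    pitch = complementary_step(pitch, gyro_rate_dps=0.5, accel_g=accel, dt=0.01)
print(f"fused pitch: {pitch:.2f} deg")
```

With a 0.5 dps bias, pure gyro integration would drift by 10 degrees over the 20 s run. The filter instead settles at about 10.25 degrees against a true 10 degrees; the residual offset equals the bias scaled by alpha * dt / (1 - alpha).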

Unmanned Aerial Vehicles (UAVs) also rely on fusion for aerial mapping and surveying. By integrating IMU, LiDAR, and GNSS/INS data, teams can more than double the vertical accuracy of 3D surveys, unlocking precise spatial data for urban mapping and infrastructure inspections.

Intelligent building systems benefit as well, using fused temperature, humidity, and environmental data to adjust conditions in real time, optimize maintenance, and drive smarter facility-wide decision-making.

Accelerate Sensor Fusion Development with Braemac

Braemac gives engineers the tools they need to develop high-performance sensor fusion systems. With an extensive electronic component portfolio that includes solutions from best-in-class suppliers specializing in cutting-edge sensors and sensor hubs, our unique offering is well-suited for embedded, UAV, robotics, and industrial platform innovation.

By integrating these technologies into a unified platform, systems can operate with greater accuracy, reliability, and efficiency, enabling smarter decision-making, reducing development time, and supporting robust, real-world performance across a wide range of applications.

Inertial Measurement Units

Inertial measurement units, or IMUs, combine gyroscopes and accelerometers (and, in 9-axis devices, magnetometers) to capture detailed information about motion, orientation, and, through integration, spatial position. When integrated into sensor fusion systems, IMUs provide critical motion and orientation data that, combined with other sensors such as GNSS receivers or environmental sensors, enables highly accurate navigation, stabilization, and tracking across a variety of applications.
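The classic pairing is an IMU for high-rate dead reckoning and a GNSS receiver for absolute but slower, noisier position fixes. The sketch below is a loosely coupled, one-dimensional Kalman filter under stated assumptions: state [position, velocity], IMU acceleration used as a control input, a 100 Hz IMU with a hypothetical constant bias, and a 1 Hz GNSS fix with 1.5 m noise. The class, parameter names, and noise values are illustrative.

```python
import random
from dataclasses import dataclass, field
from typing import List


@dataclass
class GnssImuFilter1D:
    """Loosely coupled 1D GNSS/IMU Kalman filter, state [position, velocity]."""
    pos: float = 0.0
    vel: float = 0.0
    # Initial covariance and IMU noise are illustrative assumptions.
    P: List[List[float]] = field(default_factory=lambda: [[100.0, 0.0], [0.0, 100.0]])
    accel_var: float = 0.1  # IMU acceleration noise variance, (m/s^2)^2

    def predict(self, accel: float, dt: float) -> None:
        # Dead-reckon with the IMU acceleration as the control input.
        self.pos += self.vel * dt + 0.5 * accel * dt * dt
        self.vel += accel * dt
        # P = F P F^T + G G^T q, with F = [[1, dt], [0, 1]], G = [dt^2/2, dt].
        (p00, p01), (_, p11) = self.P
        g0, g1, q = 0.5 * dt * dt, dt, self.accel_var
        n00 = p00 + 2 * dt * p01 + dt * dt * p11 + g0 * g0 * q
        n01 = p01 + dt * p11 + g0 * g1 * q
        n11 = p11 + g1 * g1 * q
        self.P = [[n00, n01], [n01, n11]]

    def update_gnss(self, z_pos: float, r: float) -> None:
        # Correct the drifting inertial solution with a GNSS position fix
        # (measurement matrix H = [1, 0], measurement variance r).
        (p00, p01), (_, p11) = self.P
        s = p00 + r
        k0, k1 = p00 / s, p01 / s
        innovation = z_pos - self.pos
        self.pos += k0 * innovation
        self.vel += k1 * innovation
        self.P = [[(1 - k0) * p00, (1 - k0) * p01],
                  [(1 - k0) * p01, p11 - k1 * p01]]


rng = random.Random(1)
f = GnssImuFilter1D()
true_pos, true_vel, dt = 0.0, 0.0, 0.01
for step in range(1, 1001):               # 10 s of IMU data at 100 Hz
    true_pos += true_vel * dt + 0.5 * 1.0 * dt * dt   # constant 1 m/s^2
    true_vel += 1.0 * dt
    f.predict(accel=1.0 + 0.05, dt=dt)   # IMU reading with a 0.05 m/s^2 bias
    if step % 100 == 0:                   # 1 Hz GNSS fix, ~1.5 m noise
        f.update_gnss(true_pos + rng.gauss(0.0, 1.5), r=1.5 ** 2)
print(f"true {true_pos:.1f} m, fused {f.pos:.1f} m")
```

Without the GNSS updates, the 0.05 m/s² bias alone would accumulate 2.5 m of position error over 10 s (0.5 × 0.05 × 10²), growing quadratically after that; each fix pulls the estimate back toward the absolute reference.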

TDK InvenSense IAM-20380

The TDK InvenSense IAM-20380 is a 3-axis gyroscope designed to simplify motion integration by replacing costly discrete components with a single, factory-calibrated 3x3x0.75 mm package. It features a programmable full-scale range up to ±2000 dps, on-chip 16-bit ADCs, and a 512-byte FIFO that allows efficient burst-reading to minimize system power consumption. By combining high-precision sensing with flexible I²C and SPI interfaces, the IAM-20380 enables manufacturers to achieve optimal stabilization and tracking performance in compact autonomous and industrial platforms.
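The full-scale setting and the 16-bit ADC together define the conversion from raw counts to angular rate, and the FIFO size determines how long the host can sleep between burst reads. This sketch assumes signed, high-byte-first samples, the full-scale steps common to InvenSense gyros (±250/±500/±1000/±2000 dps), and only the three gyro axes stored in the FIFO; verify all three against the IAM-20380 datasheet, which may quote a rounded nominal sensitivity rather than the exact 32768/FS ratio used here.

```python
SUPPORTED_FS_DPS = (250, 500, 1000, 2000)  # assumed selectable ranges


def gyro_raw_to_dps(raw_bytes: bytes, full_scale_dps: int) -> float:
    """Convert one signed 16-bit gyro sample to degrees per second."""
    if full_scale_dps not in SUPPORTED_FS_DPS:
        raise ValueError(f"unsupported full-scale range: {full_scale_dps}")
    # Assumes high byte first; 32768 counts span the full-scale range.
    raw = int.from_bytes(raw_bytes, "big", signed=True)
    return raw * full_scale_dps / 32768.0


def fifo_capacity(fifo_bytes: int = 512, axes: int = 3, bytes_per_axis: int = 2) -> int:
    """Number of complete multi-axis samples that fit in the FIFO."""
    return fifo_bytes // (axes * bytes_per_axis)


samples = fifo_capacity()                  # 512 // 6 = 85 three-axis samples
odr_hz = 1000                              # hypothetical output data rate
print(samples, f"FIFO fills in {1000 * samples / odr_hz:.0f} ms")
print(gyro_raw_to_dps(b"\x40\x00", 2000))  # 16384 counts at +/-2000 dps
```

At a hypothetical 1 kHz output rate, the 85 buffered samples give the host roughly 85 ms between reads, which is where the power saving from burst reading comes from.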

TDK InvenSense IAM-20381

The TDK InvenSense IAM-20381 is a 3-axis MotionTracking accelerometer designed for automotive applications, providing a pre-qualified alternative to complex discrete device selection. Housed in a compact 3x3x0.75 mm LGA package, it features a user-programmable full-scale range of ±2g to ±16g and integrated 16-bit ADCs for high-resolution motion sensing. With factory-calibrated sensitivity and an integrated 512-byte FIFO for efficient burst-data processing, the IAM-20381 ensures optimal performance and reduced power consumption for safety and navigation systems.
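On the host side, a FIFO burst read arrives as a block of bytes that must be split into X/Y/Z samples and scaled by the selected full-scale range. The sketch assumes 6-byte frames of signed, high-byte-first values and a FIFO holding only accelerometer data; both are assumptions to check against the IAM-20381 datasheet. A magnitude check near 1 g is a common, simple way to confirm the device is at rest before trusting its tilt.

```python
import math
import struct
from typing import List, Tuple

Sample = Tuple[float, float, float]


def parse_accel_burst(buf: bytes, full_scale_g: int) -> List[Sample]:
    """Convert a burst of 6-byte X/Y/Z frames (signed 16-bit, high byte first)
    into accelerations in g. A trailing partial frame is ignored."""
    usable = len(buf) - len(buf) % 6
    g_per_lsb = full_scale_g / 32768.0
    return [(x * g_per_lsb, y * g_per_lsb, z * g_per_lsb)
            for x, y, z in struct.iter_unpack(">3h", buf[:usable])]


def is_stationary(sample: Sample, tol_g: float = 0.05) -> bool:
    # At rest, the only measured acceleration is gravity: |a| should be ~1 g.
    return abs(math.sqrt(sum(c * c for c in sample)) - 1.0) < tol_g


# Two hypothetical frames at +/-2 g (16384 counts = 1 g) plus one stray byte.
burst = struct.pack(">3h", 0, 0, 16384) + struct.pack(">3h", 8192, 0, 14189) + b"\x01"
for sample in parse_accel_burst(burst, full_scale_g=2):
    print(sample, is_stationary(sample))
```

The second frame is a device tilted about 30 degrees: its axes differ from the first, but its magnitude is still about 1 g, so it also passes the at-rest check.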

GNSS Receivers

Global Navigation Satellite System (GNSS) receivers provide precise timing and location information by tracking signals from multiple satellites. They deliver accurate positioning, navigation, and timing data, which is critical for applications ranging from autonomous systems to industrial and infrastructure monitoring. When combined with other sensors, GNSS receivers enhance spatial awareness, support real-time decision-making, and improve overall system reliability for applications like autonomous vehicles, drones, and smart infrastructure.

u-blox ZED-F9

The ZED-F9P series from u-blox is a line of high-precision GNSS modules designed to deliver centimeter-level accuracy for industrial and consumer-grade automation. The ZED-F9P modules serve as the standard for L1/L2 multi-band positioning, offering integrated moving-base support for UAVs and attitude sensing. Meanwhile, the ZED-F9P-15B provides a specialized L1/L5 alternative, giving mobile robotics an effective frequency path in modern signal environments. By integrating RTK and PPP-RTK technologies, all four modules allow moving machinery to achieve professional-grade navigation in a compact surface-mount form factor.
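A fusion stack needs to know not just the position but how good it is. GNSS receivers commonly emit standard NMEA 0183 GGA sentences, whose fix-quality field distinguishes RTK fixed (4) from RTK float (5). The parser below is a sketch assuming that standard GGA layout; the sentence is hypothetical, and the exact output configuration should be checked in the u-blox interface description for the module in use.

```python
from typing import NamedTuple, Optional

# Standard NMEA 0183 GGA fix-quality codes.
FIX_QUALITY = {0: "invalid", 1: "GNSS", 2: "DGNSS", 4: "RTK fixed",
               5: "RTK float", 6: "dead reckoning"}


class GgaFix(NamedTuple):
    lat_deg: float
    lon_deg: float
    quality: str
    num_sats: int
    alt_m: float


def nmea_checksum(body: str) -> str:
    # XOR of every character between '$' and '*', as two uppercase hex digits.
    value = 0
    for ch in body:
        value ^= ord(ch)
    return f"{value:02X}"


def _to_degrees(value: str, hemisphere: str) -> float:
    # Latitude is ddmm.mmmm and longitude dddmm.mmmm: split off the minutes.
    dot = value.index(".")
    degrees = float(value[:dot - 2]) + float(value[dot - 2:]) / 60.0
    return -degrees if hemisphere in ("S", "W") else degrees


def parse_gga(sentence: str) -> Optional[GgaFix]:
    """Return a GgaFix, or None for corrupted, non-GGA, or no-fix sentences."""
    if not sentence.startswith("$") or "*" not in sentence:
        return None
    body, _, checksum = sentence[1:].partition("*")
    if nmea_checksum(body) != checksum.strip().upper():
        return None
    f = body.split(",")
    if len(f) < 10 or not f[0].endswith("GGA") or f[6] in ("", "0"):
        return None
    return GgaFix(_to_degrees(f[2], f[3]), _to_degrees(f[4], f[5]),
                  FIX_QUALITY.get(int(f[6]), "other"), int(f[7]), float(f[9]))


# Hypothetical sentence; the checksum is computed rather than hard-coded.
body = "GNGGA,123519.00,4807.03800,N,01131.00000,E,4,12,0.6,545.4,M,46.9,M,1.0,0000"
fix = parse_gga(f"${body}*{nmea_checksum(body)}")
print(fix)
```

A fusion filter can use the quality string to choose the measurement variance, for example trusting an RTK fixed solution at the centimeter level and a standalone fix far less.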

u-blox NEO-D9

The u-blox NEO-D9S-00B and NEO-D9C-00B are specialized data receivers that enable high-precision GNSS by decoding satellite correction streams. The NEO-D9S acts as the essential link for PointPerfect Flex via L-band satellite, providing a reliable alternative to internet-based corrections with over 99.9% uptime. Meanwhile, the NEO-D9C supports the Japanese QZSS L6 band, offering access to free CLAS and MADOCA services via two concurrent L6 reception channels. Both modules allow high-precision receivers to achieve centimeter-level accuracy without the need for terrestrial data links.
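Correction receivers such as the NEO-D9S typically pass their decoded data to the GNSS receiver as u-blox UBX binary messages. A UBX frame starts with the sync bytes 0xB5 0x62, followed by a class byte, an ID byte, a little-endian 16-bit length, the payload, and an 8-bit Fletcher checksum. The sketch below builds and validates that framing; the class/ID values in the usage lines are placeholders, so look up the real message (for instance UBX-RXM-PMP on the NEO-D9S) in the u-blox interface manual.

```python
import struct
from typing import Iterator, Tuple

SYNC = b"\xb5\x62"


def ubx_checksum(data: bytes) -> bytes:
    # 8-bit Fletcher checksum over class, id, length and payload.
    ck_a = ck_b = 0
    for byte in data:
        ck_a = (ck_a + byte) & 0xFF
        ck_b = (ck_b + ck_a) & 0xFF
    return bytes((ck_a, ck_b))


def build_ubx(msg_class: int, msg_id: int, payload: bytes) -> bytes:
    header = struct.pack("<BBH", msg_class, msg_id, len(payload))
    return SYNC + header + payload + ubx_checksum(header + payload)


def iter_ubx_frames(stream: bytes) -> Iterator[Tuple[int, int, bytes]]:
    """Yield (class, id, payload) for every valid UBX frame in a byte buffer."""
    i = 0
    while True:
        i = stream.find(SYNC, i)
        if i < 0 or i + 8 > len(stream):
            return
        msg_class, msg_id, length = struct.unpack_from("<BBH", stream, i + 2)
        end = i + 6 + length + 2
        if end > len(stream):
            return  # incomplete frame; wait for more bytes
        body = stream[i + 2:i + 6 + length]
        if stream[end - 2:end] == ubx_checksum(body):
            yield msg_class, msg_id, stream[i + 6:i + 6 + length]
            i = end
        else:
            i += 1  # false sync match or corrupted frame; resynchronize


# Hypothetical stream: two bytes of line noise, then two frames with
# placeholder class/ID values.
stream = b"\x00\x13" + build_ubx(0x02, 0x72, b"\x01\x02\x03") + build_ubx(0x0A, 0x04, b"")
for msg_class, msg_id, payload in iter_ubx_frames(stream):
    print(hex(msg_class), hex(msg_id), payload.hex())
```

Resynchronizing one byte at a time after a checksum failure lets the parser recover from corrupted or partially received frames on a noisy serial link.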

Multi-Sensor SoCs

Multi-sensor system-on-chips, or SoCs, combine processing, sensor management, and connectivity in a single, compact platform. By integrating multiple sensor inputs, such as motion, environmental, and air-quality data, these SoCs can measure, analyze, and preprocess data locally, reducing reliance on external processors or the cloud.

Multi-sensor SoC integration delivers high efficiency, low power consumption, and real-time performance, making multi-sensor SoCs ideal for applications such as Industrial IoT, predictive maintenance, smart wearables, and building automation. They allow developers to collect multiple measurements simultaneously, configure sensor behavior dynamically, and implement complex sensing tasks with minimal hardware overhead.

Nordic Semiconductor nRF52840

The nRF52840 from Nordic Semiconductor is a high-performance multiprotocol SoC designed to power complex industrial and consumer-grade sensor platforms via its 64 MHz Arm Cortex-M4 CPU. Integrated in Sensry’s Kallisto module, this SoC enables the simultaneous detection and preprocessing of 20 different measurands, including high-frequency vibration for electric motor monitoring and ambient air quality, using the power-efficient Zephyr RTOS.
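Local preprocessing often means reducing a high-rate window of samples to a handful of features before anything leaves the device. This Python sketch illustrates the idea with RMS, peak, and crest factor for a vibration window; it is not Sensry's or Zephyr's API (firmware on the nRF52840 would typically be written in C), and the signal parameters are hypothetical.

```python
import math
from typing import Dict, Sequence


def vibration_features(samples: Sequence[float]) -> Dict[str, float]:
    """Reduce a window of raw vibration samples to a few condition indicators."""
    n = len(samples)
    if n == 0:
        raise ValueError("empty window")
    mean = sum(samples) / n
    centered = [s - mean for s in samples]        # strip the DC/gravity offset
    rms = math.sqrt(sum(c * c for c in centered) / n)
    peak = max(abs(c) for c in centered)
    # Crest factor (peak/RMS) is a common indicator of impulsive faults.
    return {"rms": rms, "peak": peak, "crest_factor": peak / rms if rms else 0.0}


# Hypothetical 1 s window: 50 Hz vibration, 2 g amplitude, 1 g offset, 1.6 kHz.
fs, f0 = 1600, 50
window = [1.0 + 2.0 * math.sin(2 * math.pi * f0 * k / fs) for k in range(fs)]
print(vibration_features(window))   # 1600 samples reduced to three numbers
```

Sending three numbers per window instead of 1,600 raw samples is what keeps radio traffic and power consumption low in this kind of edge monitoring.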

When paired with the nPM1100 PMIC, the nRF52840 supports local sensor data storage for long-term monitoring without a cloud connection and can be powered via USB or Qi wireless charging. Together, these features allow developers to create autonomous, highly efficient multi-sensor devices that operate reliably even without network connectivity.

Sensor Fusion Frequently Asked Questions

What is sensor fusion?

Sensor fusion is the process of combining data from multiple sensors to create a single, reliable view of the environment. By integrating inputs from different sources, sensor fusion improves measurement accuracy and enables smarter system decisions than relying on a single sensor alone.

Why is sensor fusion important?

Sensor fusion is important because it allows systems to operate more reliably and efficiently. By combining multiple sensor inputs, systems gain redundancy, fault tolerance, and higher confidence in measurements, which is critical for applications like autonomous devices, industrial monitoring, and wearable technology.

How does sensor fusion improve spatial awareness and localization?

Sensor fusion improves spatial awareness and localization by merging data from motion, position, and environmental sensors. This helps systems understand where they are and what surrounds them, which is essential for navigation, obstacle avoidance, and precise operation in real-world environments.

What are the benefits of early sensor data fusion?

Fusing sensor data early in the processing pipeline reduces the need for extensive downstream computation. Early fusion provides cleaner, more structured data, which simplifies integration, improves interoperability across sensor types, and supports faster, more reliable decision-making.

Where is sensor fusion commonly used?

Sensor fusion is commonly used wherever multiple measurements need to be combined for smarter operation. Common applications include consumer electronics like smartphones and wearables, industrial monitoring, predictive maintenance, building automation, and environmental sensing.

How does sensor fusion support fault tolerance and redundancy?

Sensor fusion supports fault tolerance and redundancy by cross-checking data from multiple sensors. If one sensor fails or provides noisy data, the system can rely on other sensors to maintain accurate and reliable operation.

Can sensor fusion reduce system costs or complexity?

Yes, sensor fusion can reduce system costs and complexity. By combining the output of multiple, often lower-cost sensors, the system can achieve high accuracy without relying on a single, expensive sensor. It also reduces the need for extensive downstream processing and simplifies integration.

Does sensor fusion work in real time?

Sensor fusion can operate in real time, allowing systems to make decisions quickly based on incoming sensor data. Real-time fusion is essential for applications like autonomous vehicles, industrial automation, and wearable devices where immediate responses are required.

How can Braemac help with sensor fusion solutions?

Braemac can help with sensor fusion by providing access to advanced sensor fusion products, expertise, and integration support. Whether you need IMUs, GNSS receivers, or multi-sensor SoCs, Braemac can guide selection, simplify system design, and ensure that your sensor fusion solutions are reliable, efficient, and tailored to your application needs.

Tags: AI, Autonomous Vehicles, Edge Computing, Embedded, Industrial Automation, Machine Learning, Sensor Fusion, Sensors